What is Backpropagation?

Backpropagation is the essence of neural network training. It is the method of fine-tuning the weights of a neural network based on the error obtained in the previous iteration (for example, the previous epoch). Proper tuning of the weights lets you reduce error rates and makes the model more reliable by improving its generalization.

Backpropagation is short for “backward propagation of errors.” It is a standard method of training artificial neural networks. It calculates the gradient of a loss function with respect to all the weights in the network.

How Backpropagation Algorithm Works

The backpropagation algorithm computes the gradient of the loss function with respect to each weight by applying the chain rule. It works efficiently, one layer at a time, unlike a naive direct computation of each gradient. It computes the gradient but does not define how the gradient is used. It generalizes the computation in the delta rule.
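To make the chain rule concrete for a single weight, here is a minimal sketch. It assumes things the text does not specify: one neuron with a sigmoid activation, a squared-error loss, and hypothetical helper names. The gradient assembled from local derivatives is compared with a finite-difference estimate, which is a common way to sanity-check a backpropagation implementation.

```python
import math


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def loss(w: float, x: float, target: float) -> float:
    """Squared-error loss of a single sigmoid neuron y = sigmoid(w * x) (assumed setup)."""
    y = sigmoid(w * x)
    return 0.5 * (y - target) ** 2


def grad_chain_rule(w: float, x: float, target: float) -> float:
    """dL/dw built by multiplying local derivatives (chain rule)."""
    z = w * x
    y = sigmoid(z)
    dL_dy = y - target        # derivative of 0.5 * (y - target)^2 with respect to y
    dy_dz = y * (1.0 - y)     # derivative of the sigmoid with respect to z
    dz_dw = x                 # derivative of w * x with respect to w
    return dL_dy * dy_dz * dz_dw


def grad_finite_difference(w: float, x: float, target: float, eps: float = 1e-6) -> float:
    """Central-difference estimate of dL/dw, used only as a check."""
    return (loss(w + eps, x, target) - loss(w - eps, x, target)) / (2 * eps)


print(grad_chain_rule(0.5, 1.5, 1.0))
print(grad_finite_difference(0.5, 1.5, 1.0))
```

The two printed values should agree to many decimal places; the gradient is negative here because increasing `w` moves the output toward the target.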

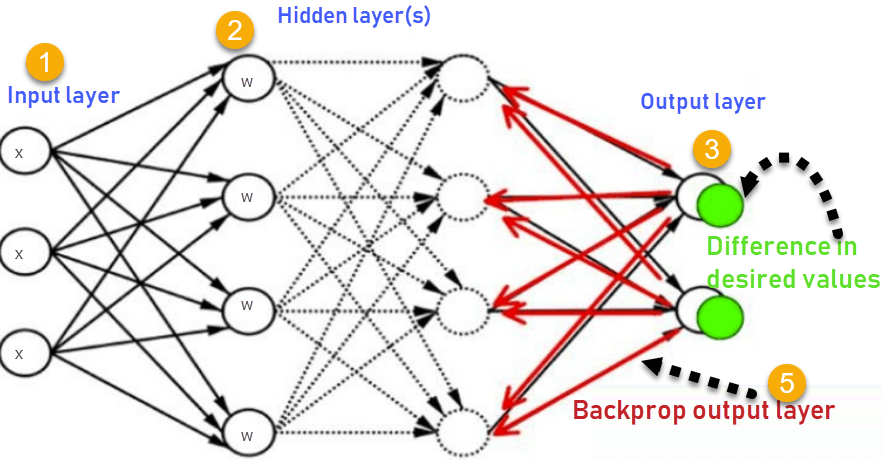

Consider the following backpropagation neural network example to understand how it works:

- Inputs X arrive through the preconnected path.

- The input is modeled using real-valued weights W. The weights are usually initialized randomly.

- Calculate the output of every neuron, from the input layer, through the hidden layers, to the output layer.

- Calculate the error in the outputs:

Error_B = Actual Output – Desired Output

- Travel back from the output layer to the hidden layer, adjusting the weights so that the error decreases.

Keep repeating the process until the desired output is achieved.
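The steps above can be sketched in plain Python for a tiny network. This is an illustrative sketch, not a reference implementation, and it rests on assumptions not stated in the text: sigmoid activations, one hidden layer, a squared-error loss (whose derivative with respect to the output is exactly Actual Output – Desired Output), a plain gradient-descent update, and the OR function as toy training data. All names are hypothetical.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train(data, n_hidden=2, lr=0.5, epochs=2000, seed=0):
    rng = random.Random(seed)
    n_in = len(data[0][0])
    # Random initial weights; the last entry of each row acts as a bias.
    w_hidden = [[rng.uniform(-1, 1) for _ in range(n_in + 1)] for _ in range(n_hidden)]
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden + 1)]
    losses = []
    for _ in range(epochs):
        g_hidden = [[0.0] * (n_in + 1) for _ in range(n_hidden)]
        g_out = [0.0] * (n_hidden + 1)
        total = 0.0
        for x, desired in data:
            xb = list(x) + [1.0]  # input plus constant bias input
            # Forward pass: input layer -> hidden layer -> output layer.
            h = [sigmoid(sum(w * v for w, v in zip(row, xb))) for row in w_hidden]
            hb = h + [1.0]
            actual = sigmoid(sum(w * v for w, v in zip(w_out, hb)))
            # Error in the output: actual output minus desired output.
            error = actual - desired
            total += 0.5 * error ** 2
            # Backward pass: output-layer delta, then hidden-layer deltas.
            delta_out = error * actual * (1.0 - actual)
            for j in range(n_hidden + 1):
                g_out[j] += delta_out * hb[j]
            for j in range(n_hidden):
                delta_h = delta_out * w_out[j] * h[j] * (1.0 - h[j])
                for i in range(n_in + 1):
                    g_hidden[j][i] += delta_h * xb[i]
        losses.append(total)  # loss measured before this epoch's update
        # Gradient-descent update using the gradients from the backward pass.
        for j in range(n_hidden + 1):
            w_out[j] -= lr * g_out[j]
        for j in range(n_hidden):
            for i in range(n_in + 1):
                w_hidden[j][i] -= lr * g_hidden[j][i]
    return w_hidden, w_out, losses


OR_DATA = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
_, _, losses = train(OR_DATA)
print(losses[0], losses[-1])
```

The backward pass only produces gradients; the final loop that subtracts `lr` times each gradient is a separate gradient-descent step, which matches the point that backpropagation itself does not define how the gradient is used.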

Why Do We Need Backpropagation?

The most prominent advantages of backpropagation are:

- Backpropagation is fast, simple, and easy to program.

- The algorithm itself has no parameters to tune; settings such as the learning rate belong to the optimizer that uses the gradients it computes.

- It is a flexible method because it does not require prior knowledge about the network.

- It is a standard method that generally works well.

- It does not require the features of the function to be learned to be specified in advance.

What is a Feed Forward Network?

A feedforward neural network is an artificial neural network where the nodes never form a cycle. This kind of neural network has an input layer, hidden layers, and an output layer. It is the first and simplest type of artificial neural network.
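A forward pass through such a network can be sketched as a loop over layers in which each layer reads only the previous layer's outputs, so no cycle can form. The sigmoid activation, the 2-3-1 layer sizes, and all weight values below are hypothetical, chosen only for illustration.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def feedforward(x, layers):
    """Pass input x through each (weights, biases) layer in order; nothing feeds back."""
    activations = list(x)
    for weights, biases in layers:
        activations = [
            sigmoid(sum(w * a for w, a in zip(row, activations)) + b)
            for row, b in zip(weights, biases)
        ]
    return activations


# Hypothetical 2-3-1 network: 2 inputs, one hidden layer of 3 units, 1 output unit.
layers = [
    ([[0.1, -0.2], [0.4, 0.3], [-0.5, 0.2]], [0.0, 0.1, -0.1]),  # hidden layer
    ([[0.3, -0.6, 0.9]], [0.05]),                                # output layer
]
print(feedforward([1.0, 0.5], layers))
```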

Types of Backpropagation Networks

The two types of backpropagation networks are:

- Static Backpropagation

- Recurrent Backpropagation

Static Backpropagation

Static backpropagation produces a mapping from a static input to a static output. It is useful for solving static classification problems such as optical character recognition.

Recurrent Backpropagation

In recurrent backpropagation, the activations are fed forward until they settle at a fixed value. After that, the error is computed and propagated backward.

The main difference between these two methods is that the mapping is immediate in static backpropagation, whereas it is not static in recurrent backpropagation.
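The fixed-point idea can be sketched with a single recurrent unit. This sketch rests on assumptions that are not from the text above: the unit computes x = tanh(w·x + u), it is iterated from x = 0 until x stops changing, the loss is squared error, and the backward step differentiates the fixed-point equation implicitly. The names are hypothetical, and the resulting gradient is checked against a finite-difference estimate.

```python
import math


def fixed_point(w, u, tol=1e-13, max_iter=10_000):
    """Feed forward repeatedly, x <- tanh(w * x + u), until x stops changing."""
    x = 0.0
    for _ in range(max_iter):
        x_new = math.tanh(w * x + u)
        if abs(x_new - x) < tol:
            return x_new
        x = x_new
    raise RuntimeError("activation did not settle to a fixed point")


def loss(w, u, target):
    x = fixed_point(w, u)
    return 0.5 * (x - target) ** 2


def grad_w(w, u, target):
    """Backward step at the fixed point via implicit differentiation of x = tanh(w*x + u)."""
    x = fixed_point(w, u)
    s = 1.0 - x * x                # tanh' at the fixed point, because tanh(w*x + u) == x there
    dx_dw = s * x / (1.0 - w * s)  # solves dx/dw = s * (x + w * dx/dw)
    return (x - target) * dx_dw    # chain rule through the squared-error loss


w, u, t = 0.5, 0.3, 0.8
eps = 1e-6
numeric = (loss(w + eps, u, t) - loss(w - eps, u, t)) / (2 * eps)
print(grad_w(w, u, t), numeric)
```

The forward iteration converges here because |w · tanh'| never exceeds 0.5 for w = 0.5; with larger recurrent weights the activations may never settle, and this approach does not apply.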

History of Backpropagation

- In 1960 and 1961, the basic concepts of continuous backpropagation were derived in the context of control theory by Henry J. Kelley and Arthur E. Bryson.

- In 1969, Bryson and Ho gave a multi-stage dynamic system optimization method.

- In 1974, Werbos stated the possibility of applying this principle in an artificial neural network.

- In 1982, Hopfield introduced his idea of a neural network.

- In 1986, through the work of David E. Rumelhart, Geoffrey E. Hinton, and Ronald J. Williams, backpropagation gained recognition.

- In 1993, Wan was the first person to win an international pattern recognition contest with the help of the backpropagation method.

Backpropagation Key Points

- Simplifies the network structure by removing weighted links that have the least effect on the trained network.

- You need to study a group of input and activation values to develop the relationship between the input and hidden unit layers.

- It helps to assess the impact that a given input variable has on a network output. The knowledge gained from this analysis should be represented in rules.

- Backpropagation is especially useful for deep neural networks working on error-prone projects, such as image or speech recognition.

- Backpropagation takes advantage of the chain and power rules, which allows it to work with any number of outputs.
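The point about assessing the impact of an input variable can be made concrete: propagating the error signal one step further, back to the inputs, gives the derivative of the output with respect to each input. This is a sketch using a hypothetical 2-2-1 sigmoid network whose weights are made up for illustration (biases omitted). A larger magnitude suggests that input has more local influence on the output at that particular point.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


# Hypothetical "trained" weights for a 2-2-1 network (biases omitted for brevity).
W_HIDDEN = [[1.5, -0.7], [0.3, 2.0]]
W_OUT = [1.2, -0.8]


def forward(x):
    h = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in W_HIDDEN]
    y = sigmoid(sum(w * hj for w, hj in zip(W_OUT, h)))
    return h, y


def input_sensitivity(x):
    """dy/dx_i for each input, obtained by propagating backward all the way to the inputs."""
    h, y = forward(x)
    delta_out = y * (1.0 - y)  # dy/dz at the output unit
    delta_h = [delta_out * W_OUT[j] * h[j] * (1.0 - h[j]) for j in range(len(h))]
    return [sum(delta_h[j] * W_HIDDEN[j][i] for j in range(len(h))) for i in range(len(x))]


print(input_sensitivity([0.4, 0.9]))
```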

Backpropagation Best Practices

Backpropagation best practices can be explained with the help of a “shoelace” analogy:

Too little tension =

- Not enough constraint (very loose)

Too much tension =

- Too much constraint (overtraining)

- Taking too much time (relatively slow process)

- Higher likelihood of breaking

Pulling one lace more than other =

- Discomfort (bias)

Disadvantages of using Backpropagation

- The actual performance of backpropagation on a specific problem is dependent on the input data.

- The backpropagation algorithm can be quite sensitive to noisy data.

- For efficiency, you need a matrix-based approach that processes a whole mini-batch at once, instead of looping over the examples in a mini-batch one by one.

Summary

- A neural network is a group of connected input/output units in which each connection has an associated weight.

- Backpropagation is short for “backward propagation of errors.” It is a standard method of training artificial neural networks.

- The backpropagation algorithm is fast, simple, and easy to program.

- A feedforward backpropagation network (BPN) is an artificial neural network whose nodes never form a cycle.

- The two types of backpropagation networks are 1) static backpropagation and 2) recurrent backpropagation.

- In 1960 and 1961, the basic concepts of continuous backpropagation were derived in the context of control theory by Henry J. Kelley and Arthur E. Bryson.

- Backpropagation simplifies the network structure by removing weighted links that have a minimal effect on the trained network.

- It is especially useful for deep neural networks working on error-prone projects, such as image or speech recognition.

- The biggest drawback of backpropagation is that it can be sensitive to noisy data.